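The accuracy class is a useful guide for classifying products. In practical applications, however, it must not be treated as the overall accuracy, because other influences act on the measurement at the same time.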

Example:

Consider two versions of the T10F torque transducer, "S" (standard) and "G" (reduced linearity deviation including hysteresis), for measuring ranges from 100 N·m to 10 kN·m.

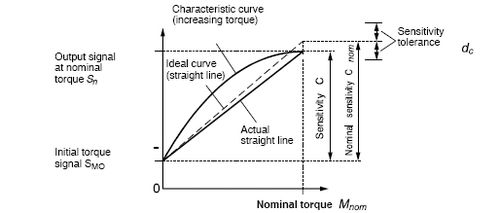

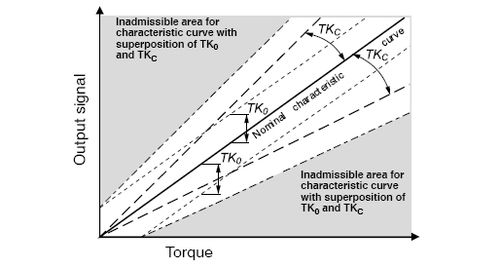

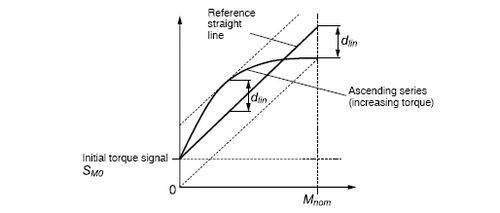

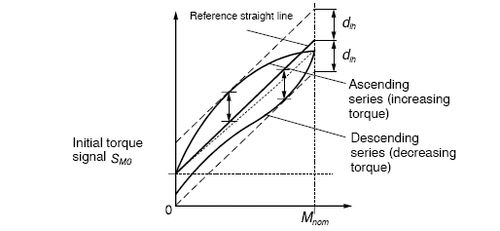

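In the specifications for version "S", the temperature effect on the zero signal (TK0) is 0.05 %, the temperature effect on sensitivity (TKC) is 0.1 %, and the linearity deviation including hysteresis (dlh) is ±0.1 %. The latter two values determine the accuracy class, which is therefore 0.1. Version "G" improves the linearity deviation including hysteresis (dlh) to just 0.05 %.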

However, the temperature effect on sensitivity (TKC) is still 0.1 %. The accuracy class of version "G" therefore remains 0.1.
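The comparison can be sketched in a few lines of Python. This is a simplified illustration, not the manufacturer's definition: it assumes the accuracy class equals the largest of the three characteristic values quoted in the text, and the names `SPECS` and `accuracy_class` are hypothetical.

```python
# Characteristic values in percent, as quoted in the text for the T10F.
SPECS = {
    "S": {"TK0": 0.05, "TKC": 0.1, "dlh": 0.1},
    "G": {"TK0": 0.05, "TKC": 0.1, "dlh": 0.05},
}


def accuracy_class(spec):
    """Return the class value and the characteristics that limit it.

    Assumption: the class is simply the maximum of the listed values.
    """
    worst = max(spec.values())
    limiting = sorted(name for name, value in spec.items() if value == worst)
    return worst, limiting


for version, spec in SPECS.items():
    cls, limiting = accuracy_class(spec)
    print(f"Version {version}: class {cls}, limited by {', '.join(limiting)}")
```

Under this assumption both versions come out as class 0.1. For "S" the class is limited by both TKC and dlh, while for "G" only TKC remains as the limiting value.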

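Clearly, the improvement in version "G" has no effect on the accuracy class, because the class is now limited by TKC alone. This value can have a considerable influence on the measurement, but in some applications, for example when measuring in the partial load range, its influence is much smaller.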